publications

2026

- PLURULE: A Challenging Benchmark for Detecting Rule Violations on Pluralistic Social Media. Zoher Kachwala, Bao Tran Truong, Rasika Muralidharan, and 3 more authors. In Proceedings of the 64th Annual Meeting of the Association for Computational Linguistics, 2026

Social media are shifting towards pluralism: community-governed platforms where groups define their own norms. What violates rules in one community may be perfectly acceptable in another. Can AI models help detect rule violations in such pluralistic communities? We formalize the task as a multiple-choice problem, mirroring how human moderators operate in the real world: given a comment and its surrounding context, identify which specific rule, if any, is violated. We introduce PLURULE, a multimodal, multilingual benchmark for detecting 17,313 rule violations across 2,419 Reddit communities spanning 3,692 pluralistic rules in 10 languages. Using this benchmark, we show that state-of-the-art vision-language models struggle significantly: even GPT-5.2 with high reasoning performs only slightly better than a trivial baseline. We also find that bigger models and increased context provide marginal gains, and that universal rules like civility and self-promotion are easier to detect. Our results show that pluralistic moderation of social media is a fundamental challenge for language models.

@inproceedings{kachwala2026plurule, title = {PLURULE: A Challenging Benchmark for Detecting Rule Violations on Pluralistic Social Media}, author = {Kachwala, Zoher and Truong, Bao Tran and Muralidharan, Rasika and Kwak, Haewoon and An, Jisun and Menczer, Filippo}, year = {2026}, booktitle = {Proceedings of the 64th Annual Meeting of the Association for Computational Linguistics}, publisher = {Association for Computational Linguistics}, }

2025

- Prefilled responses enhance zero-shot detection of AI-generated images. Zoher Kachwala, Danishjeet Singh, Danielle Yang, and 1 more author. 2025. NeurIPS 2025 Workshop; Under Review - ACL ARR

Traditional supervised methods for detecting AI-generated images depend on large, curated training datasets and fail to generalize to novel, out-of-domain image generators. As an alternative, we explore pre-trained Vision-Language Models (VLMs) for zero-shot detection of AI-generated images. We evaluate VLM performance on three diverse benchmarks encompassing synthetic images of human faces, objects, and animals produced by 16 different state-of-the-art image generators. While off-the-shelf VLMs perform poorly on these datasets, we find that their reasoning can be guided effectively through a simple prefilling of responses, a method we call Prefill-Guided Thinking (PGT). In particular, prefilling a VLM response with the phrase ‘Let’s examine the style and the synthesis artifacts’ improves the Macro F1 scores of three widely used open-source VLMs by up to 24%. We analyze this improvement by tracking models’ answer confidence at incremental intervals during response generation. For some models, prefills counteract early overconfidence (akin to mitigating the Dunning-Kruger effect), leading to better detection performance.
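Prefilling means the assistant's turn already begins with fixed text, so the model continues from that phrase instead of starting fresh. Below is a minimal sketch of that idea together with the Macro F1 metric the abstract reports. Both `render_prompt` and its `USER:`/`ASSISTANT:` template are hypothetical stand-ins, because each real VLM has its own chat template and image tokens. The metric uses `real`/`fake` as assumed class labels and the standard per-class F1 = 2TP / (2TP + FP + FN).

```python
# Prefill phrase quoted in the abstract above.
PGT_PREFILL = "Let's examine the style and the synthesis artifacts"


def render_prompt(question: str, prefill: str = "") -> str:
    """Hypothetical plain-text chat template that ends mid-assistant-turn.

    Because the assistant turn already starts with `prefill`, generation
    continues from that phrase. Real VLMs use their own templates.
    """
    return f"USER: <image>\n{question}\nASSISTANT: {prefill}"


def macro_f1(
    y_true: list[str],
    y_pred: list[str],
    labels: tuple[str, ...] = ("real", "fake"),
) -> float:
    """Unweighted mean of per-class F1, with F1 = 2TP / (2TP + FP + FN)."""
    per_class = []
    for label in labels:
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        per_class.append(2 * tp / denom if denom else 0.0)
    return sum(per_class) / len(per_class)
```

Macro F1 weights the two classes equally, so a detector cannot score well by labeling everything `real`. For example, gold labels `real, fake, fake, real` against predictions `real, fake, real, real` give per-class F1 of 0.8 and 2/3, for a Macro F1 of about 0.73.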

@misc{kachwala2025prefilled, title = {Prefilled responses enhance zero-shot detection of AI-generated images}, author = {Kachwala, Zoher and Singh, Danishjeet and Yang, Danielle and Menczer, Filippo}, year = {2025}, eprint = {2506.11031}, archiveprefix = {arXiv}, primaryclass = {cs.LG}, url = {https://arxiv.org/abs/2506.11031}, status = {NeurIPS 2025 Workshop; Under Review – ACL ARR}, note = {NeurIPS 2025 Workshop; Under Review - ACL ARR} }

- Distilling Cloze Reasoning Improves Detecting Violations on Pluralistic Social Media. Zoher Kachwala, Jisun An, Haewoon Kwak, and 1 more author. 2025. In Preparation

Large language models struggle to detect rule violations across pluralistic social media communities, where norms vary widely. We introduce DisCloze, a distillation method that teaches student models to leverage full context through high-quality reasoning traces. We generate teacher traces using task-aligned prefills (‘Let’s examine the subreddit, submission, discussion...’) rather than generic chain-of-thought prompts (‘Let’s think step by step’), producing structured reasoning that systematically analyzes community context before identifying violations. By distilling these context-aware traces into smaller models, DisCloze enables generalization from a subset of communities to 100+ unseen communities with distinct rule sets. Our results demonstrate that task-aligned prefill distillation outperforms generic CoT distillation for pluralistic content moderation.

@unpublished{kachwala2025discloze, title = {Distilling Cloze Reasoning Improves Detecting Violations on Pluralistic Social Media}, author = {Kachwala, Zoher and An, Jisun and Kwak, Haewoon and Menczer, Filippo}, year = {2025}, note = {In Preparation}, }

2024

-

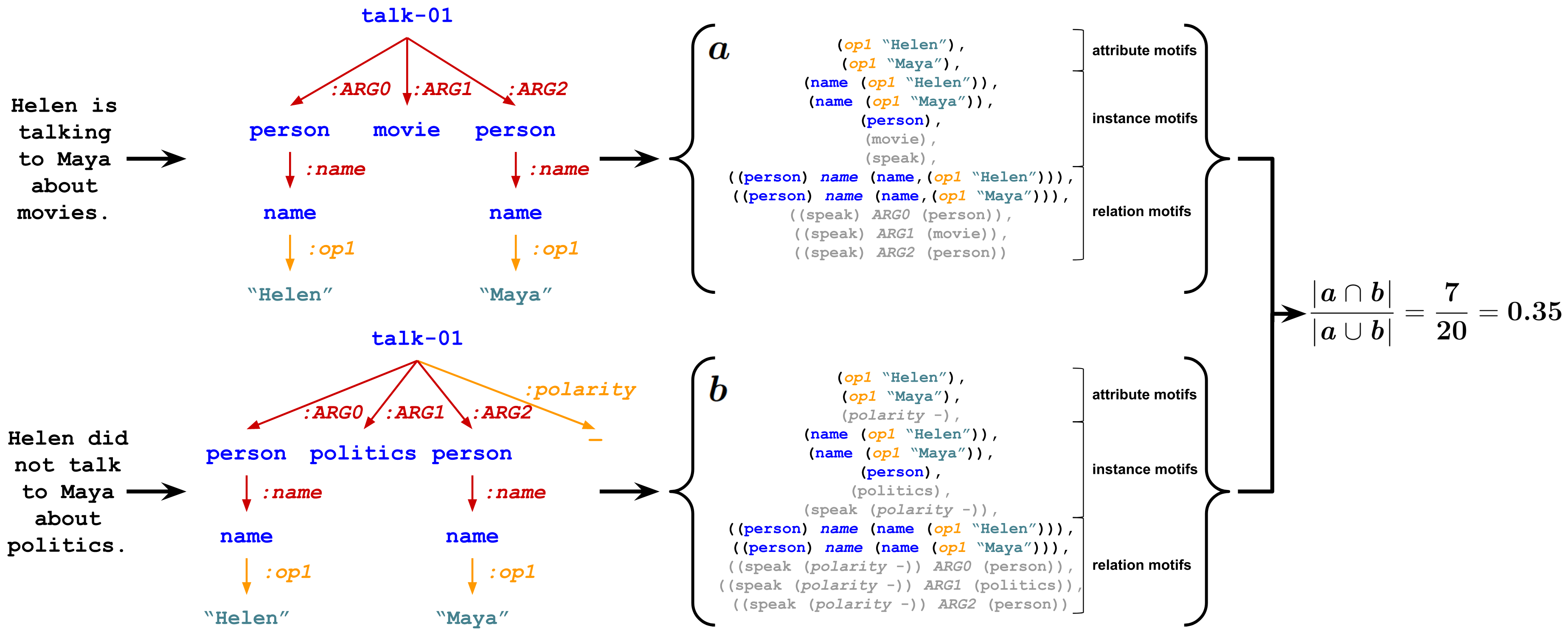

REMATCH: Robust and Efficient Matching of Local Knowledge Graphs to Improve Structural and Semantic SimilarityZoher Kachwala , Jisun An , Haewoon Kwak , and 1 more authorIn Findings of the Association for Computational Linguistics: NAACL 2024 , Jun 2024

REMATCH: Robust and Efficient Matching of Local Knowledge Graphs to Improve Structural and Semantic SimilarityZoher Kachwala , Jisun An , Haewoon Kwak , and 1 more authorIn Findings of the Association for Computational Linguistics: NAACL 2024 , Jun 2024Introduced REMATCH, a novel AMR graph evaluation metric achieving 5x speedup while ranking first in semantic similarity. Released RARE benchmark for testing AMR evaluation metrics on knowledge-grounded NLP systems.
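Alignment-based AMR metrics such as SMATCH search over variable mappings between two graphs, and that search is costly. One way to avoid it is to compare sets of concept-level local structures directly. The sketch below shows that general idea as a Jaccard overlap. It is an illustrative assumption, not REMATCH's published motif definitions or scoring. The names `motifs` and `jaccard` are hypothetical.

```python
Motif = tuple[str, ...]


def motifs(instances: dict[str, str], relations: list[tuple[str, str, str]]) -> set[Motif]:
    """Describe an AMR graph by its concepts and concept-level relation triples.

    Variables (e.g. 'w', 'b') are replaced by their concepts, so two graphs can
    be compared without searching for a variable alignment. Targets that are not
    variables (constants) are kept as written. Repeated concepts collapse into a
    single set element in this simplified version.
    """
    found: set[Motif] = {("concept", concept) for concept in instances.values()}
    for source, role, target in relations:
        found.add((instances.get(source, source), role, instances.get(target, target)))
    return found


def jaccard(a: set[Motif], b: set[Motif]) -> float:
    """Size of the intersection over size of the union; 1.0 if both are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0
```

Compare "The boy wants to go", `(w / want-01 :ARG0 (b / boy) :ARG1 (g / go-02 :ARG0 b))`, with the same graph using `girl`. The shared motifs are `want-01`, `go-02` and `(want-01, :ARG1, go-02)`. That gives 3 shared motifs out of 9 distinct ones, for a score of about 0.33. Computing this score takes linear time in the graph size.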

@inproceedings{kachwala-etal-2024-rematch, title = {{REMATCH}: Robust and Efficient Matching of Local Knowledge Graphs to Improve Structural and Semantic Similarity}, author = {Kachwala, Zoher and An, Jisun and Kwak, Haewoon and Menczer, Filippo}, editor = {Duh, Kevin and Gomez, Helena and Bethard, Steven}, booktitle = {Findings of the Association for Computational Linguistics: NAACL 2024}, month = jun, year = {2024}, address = {Mexico City, Mexico}, publisher = {Association for Computational Linguistics}, url = {https://aclanthology.org/2024.findings-naacl.64/}, doi = {10.18653/v1/2024.findings-naacl.64}, pages = {1018--1028}, }

2023

- A multi-platform collection of social media posts about the 2022 US midterm elections. Rachith Aiyappa, Matthew R DeVerna, Manita Pote, and 8 more authors. In Proceedings of the International AAAI Conference on Web and Social Media, Jun 2023

Released MEIU22, a multi-platform dataset of 30M posts across Twitter, Facebook, Instagram, Reddit, and 4chan tracking the 2022 U.S. midterm elections, alongside an open-source collection pipeline.

@inproceedings{aiyappa2023multi, title = {A multi-platform collection of social media posts about the 2022 US midterm elections}, author = {Aiyappa, Rachith and DeVerna, Matthew R and Pote, Manita and Truong, Bao Tran and Zhao, Wanying and Axelrod, David and Pessianzadeh, Aria and Kachwala, Zoher and Kim, Munjung and Seckin, Ozgur Can and others}, booktitle = {Proceedings of the International AAAI Conference on Web and Social Media}, volume = {17}, pages = {981--989}, year = {2023}, doi = {10.1609/icwsm.v17i1.22205}, url = {https://ojs.aaai.org/index.php/ICWSM/article/view/22205}, }

- The Inexplicable Efficacy of Language Models. Rachith Aiyappa and Zoher Kachwala. XRDS: Crossroads, The ACM Magazine for Students, Apr 2023

An educational survey that introduces the fundamentals of language modeling and the still-unexplained emergent capabilities of LLMs, and discusses their key limitations and recent approaches.

@article{aiyappa_inexplicable_2023, title = {The {Inexplicable} {Efficacy} of {Language} {Models}}, volume = {29}, issn = {1528-4972}, url = {https://dl.acm.org/doi/10.1145/3589654}, doi = {10.1145/3589654}, number = {3}, journal = {XRDS: Crossroads, The ACM Magazine for Students}, author = {Aiyappa, Rachith and Kachwala, Zoher}, month = apr, year = {2023}, pages = {60--62}, }